PromptOps

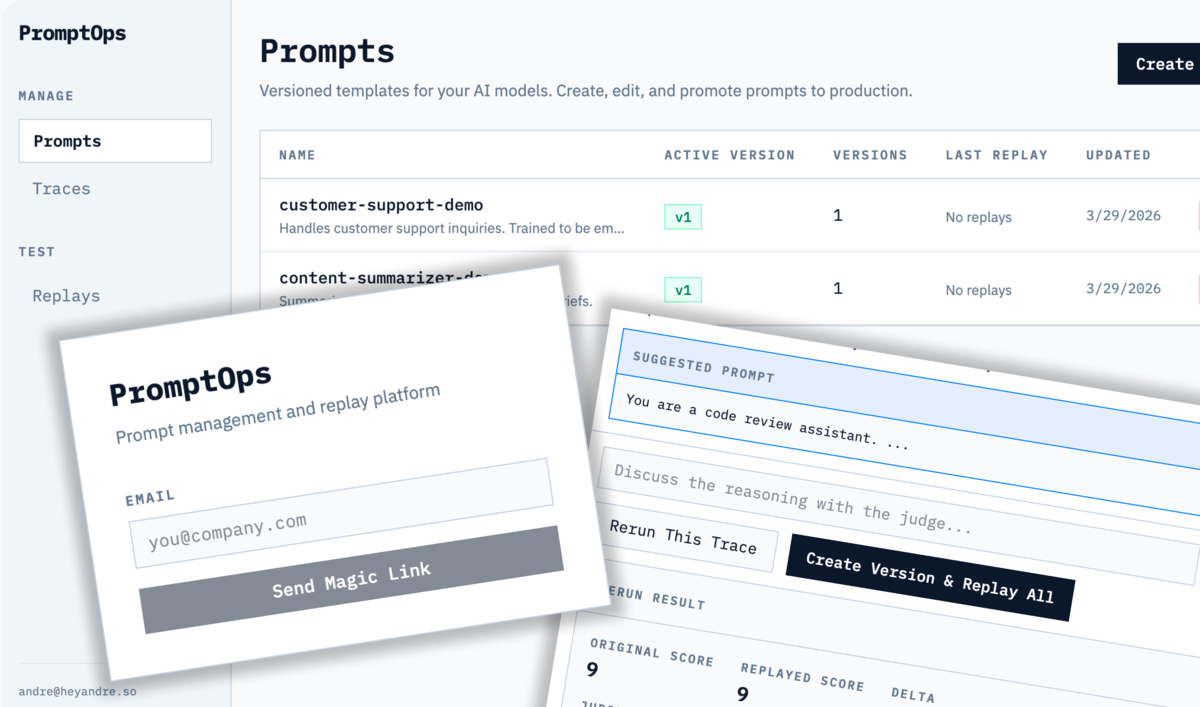

PromptOps is a prompt management and evaluation platform for teams shipping AI products. It versions prompts, generates test scenarios with AI, replays real traces against new versions, and uses an LLM-as-judge to score quality and flag regressions before changes reach production.

Problem

Changing prompts in production is risky. A small tweak that fixes one edge case can break others. Teams need a way to version, test, and compare prompt changes before shipping them, without building custom eval infrastructure.

Approach

Built a five-stage workflow: version, generate scenarios, replay, compare, promote. Users version a prompt, generate test scenarios with AI, replay real traces against the new version, compare the results with an LLM-as-judge that scores quality and flags regressions, and promote the version once it passes.
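
The versioning and replay stages can be illustrated with a minimal in-memory sketch. It is not the PromptOps implementation. It assumes prompts are `string.Template` strings with `$placeholders`, that a trace stores the input variables it was rendered with, and that `model` is any callable standing in for an LLM client. `Prompt`, `PromptVersion`, `commit`, `promote`, and `replay` are hypothetical names.

```python
from dataclasses import dataclass, field
from string import Template
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class PromptVersion:
    number: int
    template: str  # hypothetical format: string.Template placeholders, e.g. "Summarize: $text"


@dataclass
class Prompt:
    name: str
    versions: List[PromptVersion] = field(default_factory=list)
    production: Optional[int] = None  # version number currently serving traffic

    def commit(self, template: str) -> PromptVersion:
        """Append a new immutable version; earlier versions are never edited in place."""
        version = PromptVersion(number=len(self.versions) + 1, template=template)
        self.versions.append(version)
        return version

    def promote(self, number: int) -> None:
        """Point production at an existing version (the last stage of the workflow)."""
        if not 1 <= number <= len(self.versions):
            raise ValueError(f"unknown version {number}")
        self.production = number


def replay(version: PromptVersion,
           trace_inputs: List[Dict[str, str]],
           model: Callable[[str], str]) -> List[str]:
    """Re-run recorded trace inputs through a candidate version.

    `model` is a stand-in for whatever LLM client the backend actually calls.
    """
    return [model(Template(version.template).substitute(inputs)) for inputs in trace_inputs]


# Usage: two versions of one prompt, replayed against a recorded input.
prompt = Prompt("summarizer")
prompt.commit("Summarize: $text")
v2 = prompt.commit("Summarize in one sentence: $text")
outputs = replay(v2, [{"text": "hello"}], model=str.upper)
# outputs == ["SUMMARIZE IN ONE SENTENCE: HELLO"]
prompt.promote(v2.number)
```

Keeping versions immutable means a replay result can always be traced back to the exact template that produced it.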

Key Features

- AI-generated test scenarios from natural language descriptions

- Time-travel replay: re-run recorded trace inputs through new prompt versions

- LLM-as-judge with randomized A/B ordering to reduce position bias

- Interactive judge chat for improvement suggestions

- Magic-link authentication and multi-user workspaces

- API keys for programmatic integration
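
Randomized A/B ordering for the judge can be sketched as a coin flip that decides which output is shown in slot "A", followed by mapping the judge's positional answer back to a version. This is a minimal sketch, not PromptOps code. The judge signature (`judge(task, output_a, output_b)` returning `"A"`, `"B"`, or `"tie"`) and the names `judge_pair` and `always_a` are assumptions.

```python
import random
from typing import Callable

# Hypothetical judge signature: judge(task, output_a, output_b) -> "A", "B", or "tie".
Judge = Callable[[str, str, str], str]


def judge_pair(task: str, baseline: str, candidate: str,
               judge: Judge, rng: random.Random) -> str:
    """Compare two outputs, with a coin flip deciding which one is shown as "A".

    Returns "candidate", "baseline", or "tie" in terms of versions, not positions.
    """
    candidate_first = rng.random() < 0.5
    output_a, output_b = (candidate, baseline) if candidate_first else (baseline, candidate)
    answer = judge(task, output_a, output_b)
    if answer == "tie":
        return "tie"
    # Map the positional answer back to the version that occupied that slot.
    picked_a = answer == "A"
    return "candidate" if picked_a == candidate_first else "baseline"


# A deliberately position-biased judge that always prefers slot "A".
def always_a(task: str, a: str, b: str) -> str:
    return "A"


rng = random.Random(0)
verdicts = [judge_pair("summarize", "old", "new", always_a, rng) for _ in range(1000)]
candidate_wins = verdicts.count("candidate")
# The biased judge's wins split roughly evenly between versions instead of
# always landing on whichever version is placed first.
```

Randomizing does not remove a judge's position preference. It spreads that preference evenly across both versions so it washes out in aggregate. Evaluating each pair in both orders is a common, more expensive alternative.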

Outcomes

- Prompt changes ship through an end-to-end workflow with eval gates instead of vibes

- Randomizing which output the judge sees first counteracts the position bias common in LLM-as-judge setups

- A five-service Docker Compose setup lets contributors run the full stack locally with a single command
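
An eval gate can be reduced to a pass/fail rule over per-scenario judge verdicts. The sketch below is one plausible rule, not the one PromptOps uses. It assumes each verdict is `"candidate"`, `"baseline"`, or `"tie"`, counts every `"baseline"` verdict as a regression, and excludes ties from the win rate. `promotion_gate` and its threshold defaults are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def promotion_gate(verdicts: Iterable[str],
                   min_win_rate: float = 0.5,
                   max_regressions: int = 1) -> Tuple[bool, Dict[str, float]]:
    """Decide whether a candidate version may be promoted.

    verdicts: one judge outcome per scenario: "candidate", "baseline", or "tie".
    A "baseline" verdict counts as a regression; ties are excluded from the win rate.
    Threshold defaults are illustrative, not PromptOps settings.
    """
    counts = Counter(verdicts)
    decided = counts["candidate"] + counts["baseline"]
    win_rate = counts["candidate"] / decided if decided else 0.0
    regressions = counts["baseline"]
    passed = regressions <= max_regressions and win_rate >= min_win_rate
    return passed, {"win_rate": win_rate, "regressions": regressions, "ties": counts["tie"]}


ok, report = promotion_gate(["candidate", "candidate", "tie", "baseline"])
# ok is True: 1 regression is within the limit and the win rate is 2/3
blocked, _ = promotion_gate(["baseline", "baseline", "candidate"])
# blocked is False: 2 regressions exceed the limit of 1
```

Under this rule, a run where every scenario ties fails the gate because it shows no evidence of improvement. A team that wants neutral rewordings to pass would relax `min_win_rate` for that case.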

Architecture

The Next.js frontend (hosted on Vercel) talks to a FastAPI backend (hosted on Railway). PostgreSQL handles persistence, and Redis with Celery runs scenario generation and replay as asynchronous jobs. A five-service Docker Compose setup covers local development.